January 10, 2026

OpenAI just handed 300 million people a healthcare advisor that remembers their history, speaks their language, and is available instantly. The healthcare industry’s instinct is to dismiss this as “just another chatbot” or panic that “AI will replace doctors.”

Both reactions miss the actual strategic shift.

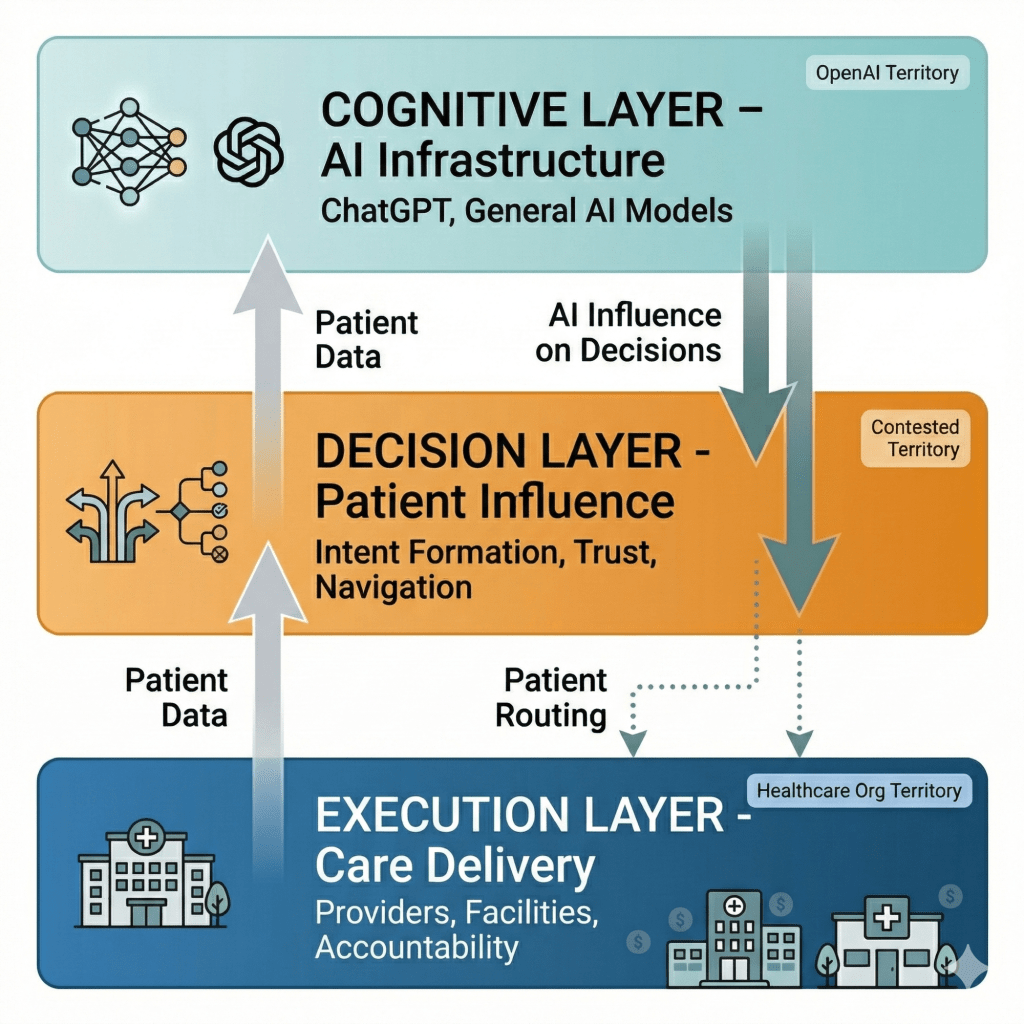

The thesis: ChatGPT won’t replace healthcare delivery—it will become the cognitive infrastructure layer that healthcare delivery depends on. This creates asymmetric power: OpenAI captures influence over healthcare decisions without bearing the costs, risks, or regulatory burdens of actually delivering care. Healthcare incumbents retain delivery capabilities but lose control over demand formation, patient relationships, and pricing power.

The strategic question isn’t “Will AI disrupt healthcare?” It’s “Which parts of healthcare can AI actually capture, which parts remain defensible, and how do you position your organization accordingly?”

To understand this, we need a framework.

Aggregation Theory and Why Healthcare Is Different

Ben Thompson’s aggregation theory explains how platforms like Google, Facebook, and Amazon accumulated power:

The classic pattern:

- Commoditize suppliers by giving consumers direct access to compare and choose

- Own the customer relationship through a superior interface

- Create network effects where more users attract more suppliers, which attracts more users

- Capture value through the platform layer while suppliers compete on the platform’s terms

Google didn’t create web content—but by controlling search, they controlled which content gets seen. Facebook didn’t create social content—but by controlling the feed, they determined what reaches audiences. Amazon didn’t manufacture most products—but by controlling e-commerce discovery, they shaped purchasing decisions.

The core insight: Control the demand side (users), and you can commoditize the supply side (businesses). The platform becomes more valuable than the underlying service providers.

This model works when:

- Demand is frequent and comparable (daily searches, social media checks, online shopping)

- Users can easily switch between suppliers (one click to a different website or product)

- The platform can route demand efficiently (search results, recommendations, listings)

- Marginal costs of serving more users approach zero (software scales cheaply)

Healthcare violates every assumption.

Health needs are episodic, not daily. A routine checkup happens once a year; most people interact with healthcare intermittently. When you do need care, the decision context varies wildly—emergency versus preventive, chronic versus acute, chosen versus required.

You can’t easily switch providers. Your insurance network is pre-determined by your employer. Your local hospital options are constrained by geography. Specialists require referrals. Switching your primary care physician means transferring years of medical history.

Routing demand is complicated by regulation, licensing, and physical constraints. An AI can recommend a cardiologist, but it can’t prescribe medications, order tests, or perform surgery. Only licensed entities in specific locations can actually deliver care.

Marginal costs don’t approach zero. Every patient interaction requires human clinicians, physical facilities, and expensive equipment. Healthcare doesn’t scale like software.

So aggregation theory doesn’t apply to healthcare… right?

Wrong. It applies differently.

ChatGPT won’t capture healthcare the way Google captured search or Amazon captured e-commerce. But it will capture something more subtle and potentially more valuable: the intent formation layer, the trust accumulation layer, and the decision influence layer—even while healthcare delivery remains firmly in the hands of traditional providers.

Understanding this asymmetry is the key to strategy.

The Core Strategic Questions

If you’re a healthcare executive right now, you face three interrelated decisions:

Question 1: Do you make your clinical data accessible to ChatGPT and similar AI systems?

This isn’t a simple yes/no. It’s about how you make data available, what data you share, and what you get in return.

Share too freely, and you’re educating an AI that could route patients to competitors. Share too restrictively, and you’re invisible to the AI layer that’s becoming the front door for healthcare decision-making.

Question 2: Do you build your own vertical AI or integrate with general AI platforms?

Building proprietary AI requires $10M+ investment over years, deep technical talent, and data that’s genuinely differentiated. Most healthcare organizations don’t meet this bar.

But some do—and for them, building is the only defensible strategy. The question is: which category are you in?

Question 3: How do you redesign your organization for a world where patients arrive AI-educated?

This is less about technology and more about workflow, culture, incentives, and business models. Patients who’ve consulted AI before arriving at your office expect different interactions than patients who arrived confused and dependent on your expertise.

These three questions are interconnected. Your data sharing strategy affects whether building proprietary AI makes sense. Your build-vs-integrate decision affects how you redesign workflows. Your organizational readiness affects whether any AI strategy succeeds.

This essay provides a framework for answering all three—based on actual strategic position, not wishful thinking.

The Landscape: Where AI Captures Value and Where It Doesn’t

Health intent is episodic and heterogeneous. The same person is:

- A consumer (researching symptoms at 11pm)

- A patient (acute care with limited choice)

- A benefits member (constrained by insurance networks)

- A caregiver (navigating someone else’s care)

- An emergency case (no choice, no time)

Each context has completely different constraints, switching costs, and decision factors.

Where AI can capture demand:

- Ambiguous symptoms requiring triage

- Chronic condition management and monitoring

- Pre-visit preparation and post-visit follow-up

- Medication adherence and lifestyle coaching

- Care navigation when time permits

Where AI cannot capture demand:

- Emergency department visits (no time to consult AI)

- Inpatient admissions (physician-directed)

- Specialist referrals gated by insurance requirements

- Procedural care requiring specific facilities

- Narrow network plans with limited choice

- Court-ordered or involuntary care

The majority of healthcare spending happens in the second category. ChatGPT capturing the first category doesn’t automatically capture the revenue or power in the second.

But that framing misses something crucial: the first category is where all healthcare journeys begin. And controlling the beginning of the journey—even when you don’t control the destination—is extraordinarily valuable.

The Intent Formation Layer

Before someone decides they need a cardiologist, they wonder if their chest tightness is serious. Before they know they need emergency care, they’re uncertain about symptom severity. The AI shapes how people frame their health concerns—which fundamentally influences what happens next.

The trust gradient. Every health question answered correctly builds confidence. Every anxiety reduced builds loyalty. By the time someone needs serious care, they may already have had 10-20 positive interactions with ChatGPT. That accumulated trust influences specialist selection, treatment decisions, and care navigation even when the user thinks they’re choosing independently.

The preparation and follow-up loops. An AI platform can’t control the surgery. But it can shape pre-surgical education, post-surgical monitoring, medication adherence, physical therapy compliance, and follow-up question answering. The actual procedure is perhaps 5% of the patient journey. The other 95% is now contestable.

The routine care steering layer. Yes, emergency care isn’t routeable. But the distinction between “I should go to urgent care” versus “I should wait and call my doctor Monday” versus “I should go to the ER” is absolutely routeable. That triage decision—made millions of times per day—determines network utilization patterns, specialist referrals, and downstream care cascades.

The Economics of Influence

Think about the economics differently:

Consider a health system with $2 billion in annual revenue. Maybe $1.2 billion is truly non-routeable (emergency, inpatient, narrow network). But the remaining $800 million comes from:

- Specialist referrals that could have gone to competitors

- Imaging and lab orders that could have been done elsewhere

- Follow-up visits that could have been skipped or redirected

- Preventive care that could have been ignored

- Chronic disease management that could have defaulted to other systems

If ChatGPT influences even 10% of that routeable volume—shifting $80 million in revenue—that’s meaningful platform power. Not winner-take-all dominance. But significant leverage.
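To make the arithmetic explicit, here is a minimal Python sketch using the illustrative figures above. The function name `shifted_revenue` and the extra 5% and 20% scenarios are assumptions added for comparison, not figures from any real health system.

```python
def shifted_revenue(total: float, non_routeable: float, influence: float) -> float:
    """Revenue an AI layer could redirect, given the share of routeable volume it influences."""
    routeable = total - non_routeable
    return routeable * influence


TOTAL_REVENUE = 2_000_000_000   # illustrative health system from the text
NON_ROUTEABLE = 1_200_000_000   # emergency, inpatient, narrow network

# 10% is the scenario in the text; 5% and 20% are hypothetical bounds for comparison.
for influence in (0.05, 0.10, 0.20):
    shifted = shifted_revenue(TOTAL_REVENUE, NON_ROUTEABLE, influence)
    print(f"{influence:.0%} of routeable volume -> ${shifted / 1e6:,.0f}M redirected")
```

Even the low scenario moves tens of millions of dollars, which is why influence over a minority of volume still matters.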

The refined analogy:

Picture ChatGPT in the restaurant business. It doesn’t control reservations, seating, or kitchens. But what if it controls:

- Which restaurants appear in your consideration set

- How you interpret menu descriptions

- Whether you make a reservation at all versus cooking at home

- Post-meal satisfaction assessment that influences future choices

- The questions you ask your server and how you evaluate answers

Suddenly the platform that “just” helps with browsing has shaped the entire dining industry’s demand curve—even though it takes no transaction fee and doesn’t operate a single kitchen.

The strategic implication:

The first category above, where AI can capture demand, represents maybe 15-25% of total healthcare spending. But it represents 50-70% of healthcare decision moments.

Controlling decision moments without controlling delivery creates asymmetric leverage. You don’t need to own the hospital to influence which hospital someone chooses. You don’t need to employ physicians to shape how patients evaluate physician recommendations. You don’t need to process claims to affect utilization patterns.

This is why OpenAI’s move is genuinely threatening—not because they’ll replace healthcare delivery, but because they’ll become the cognitive infrastructure layer that healthcare delivery depends on.

When patients arrive at your office having already consulted ChatGPT, your clinical autonomy hasn’t disappeared—but it’s now being evaluated against an AI baseline the patient trusts. When referral decisions are being shaped by AI guidance patients received before the appointment, your network isn’t obsolete—but your ability to direct flow has weakened.

The power shift is real. It’s just more subtle than “AI takes all the revenue.” It’s “AI reshapes the demand curve, influences care-seeking behavior, and captures the relationship layer—while healthcare companies remain essential for delivery but lose pricing power and flow control.”

That’s actually more dangerous than direct competition. Because there’s no clear defensive move when your competitor isn’t replacing you—just making you progressively more substitutable.

The Data Custody Illusion: Why HIPAA Doesn’t Protect You

The simple narrative goes like this: ChatGPT handles cognition, healthcare companies handle custody. Clean separation. ChatGPT can’t access protected health information (PHI), so healthcare incumbents are safe behind their HIPAA walls.

This is wrong on three levels.

Level 1: Users Will Share PHI Anyway

ChatGPT’s terms of service say “don’t share identifiable health information.” Users will ignore this completely.

People already tell ChatGPT:

- “I’m a 34-year-old woman with a history of endometriosis…”

- “My doctor prescribed me lisinopril but I’m worried about…”

- “My son has been diagnosed with autism and we’re trying to…”

- “I had a mastectomy last year and now my oncologist suggests…”

These are identifiable health disclosures. They’re voluntary. They’re happening right now at massive scale. And they’re creating a dataset of real health information outside HIPAA’s regulatory boundary.

The implication: OpenAI is accumulating real-world health data through voluntary user sharing—not anonymous aggregates, but specific clinical scenarios with context. This data improves their models. It reveals gaps in care. It identifies patterns in patient concerns that health systems never see because they only interact with patients during episodes of care.

ChatGPT knows what health questions people have at 2am that they never ask their doctor. That’s strategic intelligence healthcare companies don’t have access to.

Level 2: Healthcare Organizations Will Create Compliance Exposure

The temptation will be overwhelming: “Let’s integrate ChatGPT into our clinical workflow to save time.”

What this actually means:

- A nurse uses ChatGPT to help draft patient education materials (copying in patient details for context)

- A physician uses it to summarize complex cases (pasting in clinical notes)

- An administrator uses it to analyze utilization patterns (uploading de-identified data that’s re-identifiable)

- A care coordinator uses it to draft referral letters (including patient history)

Each of these creates covered entity liability. The moment a healthcare organization employee uses ChatGPT in their professional workflow with any identifiable information—even accidentally, even in good faith—they’ve potentially violated HIPAA.

The safeguards that are supposed to prevent this:

- Employee training (routinely ignored)

- Technical controls (easily bypassed)

- Policy enforcement (requires monitoring that most organizations lack)

- Business Associate Agreements (which OpenAI may not sign for general ChatGPT use)

Integration patterns that actually work:

✓ Patient-mediated sharing: The user exports their own data from the patient portal and shares it voluntarily with ChatGPT. The healthcare organization has no involvement. The user bears the privacy risk.

✓ De-identified summaries: Strip all identifiers (names, dates, locations, rare diagnoses) before any external processing. This is harder than it sounds—ZIP codes, ages, and rare conditions can re-identify patients.

✓ On-device processing: AI runs locally on user’s device, nothing transmitted. Computationally expensive, limits model sophistication, but genuinely private.

✓ Strict PHI redaction pipelines: Automated detection and removal of the 18 identifier types listed in HIPAA’s Safe Harbor de-identification standard before any API call. Requires technical sophistication most organizations lack.

✓ BAA-covered integrations: Formal Business Associate Agreement with audit trails, minimum necessary access, and breach notification. OpenAI would need to agree to these terms for specific partnerships.
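The redaction step in such a pipeline can be sketched roughly as follows. This is a minimal illustration, not a compliant implementation: the `PATTERNS` table and the `redact` function are hypothetical names, the regular expressions cover only a handful of identifier types, and the clinical note is synthetic.

```python
import re

# Illustrative patterns for a handful of identifier types. A production pipeline
# must cover all 18 categories (names, addresses, biometric IDs, and so on),
# which generally needs named-entity recognition and human review, not just regex.
PATTERNS = {
    "MRN": re.compile(r"\bMRN[:#\s]*\d+\b", re.IGNORECASE),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "ZIP": re.compile(r"\b\d{5}(?:-\d{4})?\b"),
}


def redact(text: str) -> tuple[str, dict[str, int]]:
    """Replace matched identifiers with placeholders and count hits per type."""
    counts: dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, hits = pattern.subn(f"[{label}]", text)
        if hits:
            counts[label] = hits
    return text, counts


# Synthetic note; only the redacted text would ever be sent to an external API.
note = "Pt seen 03/14/2024, MRN: 00123456. Call 555-123-4567 or jane.doe@example.com. ZIP 98109."
clean, counts = redact(note)
print(clean)
print(counts)
```

Note what this sketch would miss: a patient name written in free text, an age over 89, or a rare diagnosis would pass straight through. That gap is the reason pattern matching alone is not enough.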

Integration patterns that will fail (but organizations will try anyway):

✗ “We’ll just anonymize it”: Re-identification attacks are well-documented. Combinations of quasi-identifiers (age + diagnosis + procedure date) often uniquely identify individuals.

✗ “It’s for internal use only”: Doesn’t matter. If a covered entity shares PHI with an external system without authorization or a BAA, it’s a violation.

✗ “We trust our employees not to share PHI”: They will. Not maliciously—out of convenience, time pressure, and genuine desire to help patients.

✗ “Patients consent when they sign our general forms”: HIPAA requires specific authorization for uses beyond treatment, payment, and healthcare operations. General AI processing likely doesn’t qualify.
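Why “we’ll just anonymize it” fails can be illustrated with a simple k-anonymity check: count how many records share each combination of quasi-identifiers, and flag any combination shared by fewer than k people. The records below are synthetic, and the function name `risky_records` and the threshold `k` are assumptions for illustration.

```python
from collections import Counter


def risky_records(records, quasi_identifiers, k=5):
    """Return records whose quasi-identifier combination appears fewer than k times."""
    def key(record):
        return tuple(record[q] for q in quasi_identifiers)

    group_sizes = Counter(key(r) for r in records)
    return [r for r in records if group_sizes[key(r)] < k]


# Synthetic records with no direct identifiers (no names, no full ZIP codes).
records = [
    {"age": 34, "zip3": "981", "diagnosis": "endometriosis"},
    {"age": 34, "zip3": "981", "diagnosis": "hypertension"},
    {"age": 34, "zip3": "981", "diagnosis": "hypertension"},
    {"age": 61, "zip3": "981", "diagnosis": "hypertension"},
]

# The ZIP prefix alone puts every record in one group of four,
# but the full combination isolates two of the four records.
print(len(risky_records(records, ["zip3"], k=2)))
print(len(risky_records(records, ["age", "zip3", "diagnosis"], k=2)))
```

A dataset that passes this check for one set of fields can still fail once another field, such as a procedure date, is joined in.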

Level 3: The First Major Breach Will Change Everything

Right now, we’re in the permissive phase. Experimentation is happening. Guidance is vague. Enforcement is absent.

The first time:

- A patient’s confidential health information shows up in ChatGPT’s training data

- A hospital employee’s use of ChatGPT leaks patient records

- An AI hallucination causes patient harm and discovery reveals improper PHI sharing

- A bad actor exploits a healthcare organization’s AI integration to access PHI

…the regulatory response will be swift and severe.

The HHS Office for Civil Rights (OCR), which enforces HIPAA, will issue guidance. Fines will be levied. Healthcare organizations will panic-implement blocks on AI tools. The integration pathway will become far more constrained.

This isn’t a hypothetical risk. It’s a certain future reality. The only question is timing.

What This Actually Means Strategically

The HIPAA boundary isn’t protecting healthcare companies—it’s creating a false sense of security.

The real dynamics:

- Consumer data flows to AI anyway (voluntary sharing outside HIPAA)

- Healthcare organizations face asymmetric risk (liable for breaches, but don’t control the platform)

- Integration requires sophisticated compliance infrastructure (most organizations don’t have this)

- Regulatory clarity will come after the first disaster (by definition, too late for whoever causes it)

Healthcare companies that think “HIPAA protects us” are misunderstanding the game. The question isn’t whether data flows to AI. It already does. The question is whether you participate in that flow in a way that creates strategic advantage rather than just liability exposure.

The Harm-at-Scale Problem

Consumer aggregators tolerate errors because the consequences are minor. A bad restaurant recommendation ruins dinner. A bad health recommendation can kill someone.

The second-order effects no one is modeling:

Over-triage amplification: If AI slightly increases anxiety and encourages ER visits for low-acuity symptoms, you multiply unnecessary care across millions of users. Emergency departments can’t absorb a 10% volume increase. Costs spike. Wait times explode. Actual emergencies get delayed.

Under-triage false reassurance: If AI provides false comfort that delays necessary care, you create harm that manifests months later—harder to trace, harder to attribute, but real. “ChatGPT said it was probably nothing” becomes a legal liability for someone.

Defensive medicine acceleration: When patients arrive with AI-generated differential diagnoses, clinicians order more tests to rule out AI-suggested possibilities—even low-probability ones—because the medicolegal risk of dismissing patient concerns has increased.

System load volatility: Healthcare capacity is relatively fixed in the short term. AI-driven demand shifts—even small ones—create bottlenecks in specialties, geographies, or procedure types that can’t quickly adjust supply.

This isn’t hypothetical. WebMD already increased health anxiety and unnecessary care-seeking at scale. AI will amplify these effects because it’s more persuasive, more accessible, and more trusted.

The Vertical Depth Paradox: When Rare Beats Common

Here’s where the strategic map gets interesting. While general AI commoditizes common medical knowledge, it creates a power vacuum in specialized domains.

ChatGPT can explain diabetes management because there are millions of diabetic patients and thousands of published studies. The training data is abundant. The clinical patterns are well-documented. The treatment protocols are standardized.

But what about:

- A rare genetic mutation seen in 200 patients worldwide

- An autoimmune condition with 15 published case studies

- A novel cancer subtype identified through genomic sequencing

- Medication interactions in patients with multiple rare conditions

- Long-term outcomes from experimental protocols

This is the rare disease moat—and it’s misunderstood.

The moat isn’t just “we have data ChatGPT doesn’t have.” The moat is:

1. Longitudinal Outcome Data That Doesn’t Exist in Literature

Academic publications lag reality by 2-5 years. By the time a treatment protocol is published, specialized centers have already iterated through multiple versions based on real-world outcomes.

A rare disease network treating 500 patients over 10 years with Protocol A, B, and C has learned:

- Protocol A works for genetic variant X but not Y

- Patients with biomarker Z respond better to Protocol C

- Certain medication combinations produce unexpected benefits in subgroup W

- Long-term side effects emerge at year 5 that weren’t visible in 2-year trials

None of this is in ChatGPT’s training data. It can’t be inferred from general medical knowledge. It only exists in the longitudinal records of specialized treatment centers.

2. Multi-Modal Integration Across Data Types

Rare diseases often require synthesizing:

- Whole genome sequencing (3 billion base pairs)

- Family pedigrees spanning generations

- Metabolic pathway analysis

- Protein expression patterns

- Clinical symptoms across organ systems

- Treatment response over years

General AI can process each data type individually. Specialized AI can identify patterns across data types that predict outcomes: “Patients with this genetic variant + this metabolic marker + this symptom pattern respond to treatment X with an 80% success rate, versus 20% in the general population.”

That predictive capability doesn’t come from better algorithms—it comes from having all the data types on the same patients over time, which only exists in specialized registries.

3. Expert Clinical Judgment Encoded in Decision Trees

Rare disease specialists develop pattern recognition that isn’t written down anywhere. “When you see symptom combination A, check for condition B even though textbooks don’t link them—we’ve seen this correlation in our patient population.”

This tacit knowledge can be encoded in AI through:

- Analysis of specialist notes over thousands of cases

- Decision patterns from tumor boards and case conferences

- Treatment adjustments made by experienced clinicians

- Failure patterns that aren’t published (negative results rarely make journals)

A general AI trained on published literature doesn’t capture the clinical wisdom embedded in 20 years of specialized practice.

4. Network Effects in Rare Disease Communities

Patients with rare diseases find each other. They share experiences. They participate in registries. They enroll in trials. The organizations that coordinate these communities accumulate data and trust that general platforms cannot replicate.

But—and this is critical—data depth alone isn’t advantage.

When Vertical Depth Becomes Strategic Advantage

Rare disease expertise only translates to competitive moat when paired with:

Operational capacity to deliver care. Having the world’s best AI for a rare genetic condition means nothing if you can’t see patients for 6 months, don’t have the treatment protocols available, or lack the multidisciplinary team required for complex cases.

Payer alignment. Many rare disease treatments cost $500K-$2M annually. Without insurance coverage and prior authorization pathways, your AI recommendations are clinically sound but economically inaccessible.

Referral pathway control. Most rare disease patients need a diagnosis before they know they have a rare disease. If genetic counselors and specialists control the referral pathway, your AI needs to be embedded in their workflow—or you need direct-to-consumer access (which requires different capabilities entirely).

Regulatory clearance. If your AI is influencing treatment decisions, you may need FDA clearance as clinical decision support software. That’s a multi-year, multi-million dollar investment.

Examples of Vertical Depth + Strategic Advantage

Fred Hutchinson Cancer Center (oncology):

- Data: Decades of clinical trial data, genomic sequencing, treatment protocols, and patient outcomes for blood cancers and solid tumors

- Advantage: They know which patients with which molecular profiles respond to which therapies—knowledge that exists nowhere in published literature because trials are still ongoing or results aren’t yet published

- Moat: Integration of genomic data, tissue samples, treatment response, and long-term survival outcomes across modalities

- Capture: Direct patient relationships through NCI designation, clinical trial enrollment that generates ongoing data, research partnerships with pharma that fund the infrastructure

Memorial Sloan Kettering (oncology):

- Data: Treatment protocols and outcomes across hundreds of thousands of cancer cases, multi-disciplinary tumor board decisions

- Advantage: Institutional knowledge about rare cancer presentations, treatment complications, and long-term survivorship patterns

- Moat: Multi-specialty expertise (surgical oncology, medical oncology, radiation oncology, pathology, radiology) integrated in decision-making for complex cases

- Capture: Brand reputation that drives referrals, insurance center-of-excellence designations, physician training programs that create referral networks

GeneDx (genomics):

- Data: Whole genome sequences plus phenotype data on 1M+ patients with rare and undiagnosed conditions

- Advantage: They don’t just interpret your genome. They compare it to patterns from more than a million patients with outcomes data, helping classify variants of uncertain significance through population-level analysis

- Moat: Multi-modal integration (genetic + clinical + family history + developmental milestones) that identifies patterns invisible to general AI

- Capture: Direct relationships with genetic counselors, integration with clinical workflows, outcomes tracking that improves interpretation accuracy over time

Talkspace (mental health):

- Data: 10+ million therapy hours with conversational analysis, treatment matching outcomes, symptom progression tracking

- Advantage: Semantic patterns in therapeutic conversations that predict treatment response, therapist-patient fit, and risk escalation—patterns that only emerge through actual therapeutic relationships

- Moat: Longitudinal behavioral data from real therapy sessions, not survey responses or intake forms

- Capture: They provide both the AI-informed matching/support AND the therapy delivery—closed loop where every session improves the model and every model improvement enhances matching

Tempus (oncology):

- Data: Genomic + imaging + pathology + treatment response on 100K+ cancer patients across dozens of cancer types

- Advantage: Multi-modal treatment response prediction for specific cancer subtypes that accounts for genetic, molecular, and clinical factors

- Moat: Integration across data types that only exists in their clinical partnerships—they’re embedded in care delivery, not just analyzing historical data

- Capture: Clinical partnerships with major cancer centers, tumor board integration, pharma relationships for clinical trial matching, payer contracts for treatment selection support

Counter-Examples Where Vertical Depth Fails

Rare disease registry without treatment capacity:

You have longitudinal data on 500 patients with a rare metabolic disorder. You’ve identified treatment response patterns. But you can’t see patients—you’re a research consortium, not a clinical provider.

Result: Patients get diagnosed, get educated by your AI, then get referred elsewhere for treatment. You captured information value but not economic value.

Specialty AI without insurance coverage:

You built the world’s best AI for predicting response to a rare disease therapy. The therapy costs $800K annually. Insurance denies 60% of requests.

Result: Your AI recommendations are correct but unaffordable. Patients get educated then frustrated. No path to treatment means no path to revenue.

Vertical knowledge without workflow integration:

Your cancer genomics AI is more accurate than general models. But it requires clinicians to leave their EHR, manually input patient data into your separate system, then copy results back into clinical notes.

Result: Adoption fails despite technical superiority. Physicians don’t have time for multi-step workflows. Your AI gathers dust while inferior but integrated solutions win.

Data depth in a declining specialty:

You have the world’s most comprehensive database on a surgical procedure that’s being replaced by less invasive alternatives.

Result: Your vertical depth is in a shrinking market. No amount of AI sophistication changes the fact that fewer patients need what you offer.

The rare disease moat is real—but only for organizations that understand it’s a data + delivery + economics moat, not just a data moat.

The Payer Counter-Aggregation

Here’s what most analysis misses: Payers are the natural counter-aggregator to consumer AI.

Payers already have:

- Longitudinal claims data across all providers

- Utilization management leverage (prior auth, step therapy)

- Network design that constrains patient choice

- Benefit design that shapes care-seeking behavior

- Direct employer relationships covering roughly 160 million Americans

Three Scenarios for AI Power

Scenario 1: AI as Consumer Advocate

ChatGPT helps users navigate to the most appropriate, cost-effective care. Patients get educated about options. They question unnecessary tests. They seek second opinions. They optimize within their insurance constraints.

Payer impact: Reduced waste benefits them, but they lose information asymmetry and control. Educated patients demand better service, question denials, and switch plans when dissatisfied.

Provider impact: Volume-based providers lose unnecessary utilization. Value-based providers benefit from patients who engage more effectively.

Likelihood: This is the narrative OpenAI promotes, but payer incentives don’t fully align. Expect friction and counter-moves.

Scenario 2: AI as Payer Gatekeeper

UnitedHealth, Cigna, or Elevance Health (formerly Anthem) builds (or acquires) its own AI layer that “helps” members but primarily optimizes for cost containment. Prior authorization becomes conversational but remains restrictive. Denials get friendlier but not less frequent.

“Based on your symptoms and our clinical guidelines, we recommend trying physical therapy before approving the MRI. I’ve identified three in-network PTs near you and can schedule an appointment this week. If symptoms don’t improve after 6 weeks, we can revisit imaging.”

Payer impact: Strengthens utilization management while improving member satisfaction scores. AI handles tier-one questions, escalates only when necessary.

Provider impact: Referral patterns get shaped by payer AI before the patient even reaches a clinical encounter. Fighting payer recommendations becomes harder when they’re personalized and evidence-based.

Likelihood: High. This aligns with payer incentives and existing infrastructure. Watch for acquisitions of health navigation AI companies and integration into member portals.

Scenario 3: Employer-Sponsored AI

Large employers deploy AI health navigation as a benefit, integrated with their specific plan design. This creates proprietary aggregation—the AI knows your coverage, your network, your out-of-pocket status, and routes accordingly.

Imagine Microsoft offering employees an AI health assistant that understands Microsoft’s health plan, negotiated rates, HSA balances, and network. It routes to Microsoft-preferred providers (better negotiated rates) and helps employees maximize their benefits (suggesting generic prescriptions, in-network specialists, and preventive care that keeps them from hitting their deductible).

Employer impact: Better healthcare navigation reduces total cost of care. Improved employee satisfaction. Data insights help negotiate better rates.

Provider impact: Large employers with customized AI navigation create fragmented demand. You’re either in the preferred network or losing volume.

Likelihood: Medium to High for Fortune 500 companies. Creates fragmentation, not consolidation, in the healthcare AI landscape.

The strategic implication: The power shift might not be “ChatGPT over incumbents.” It might be “payers + AI over providers” or “employers + AI reshaping insurance.”

This fundamentally changes the defensive strategy. If AI becomes the enforcement mechanism for utilization management rather than the liberation tool for consumer choice, some incumbents strengthen rather than weaken.

The Incentive Misalignment Problem

“Just shift to value-based care” sounds simple until you map the actual transition requirements.

Most providers still operate in mixed models:

- 60% fee-for-service revenue

- 30% value-based contracts with weak accountability

- 10% true capitation or shared savings

For these organizations, AI-driven prevention or reduced utilization is financially harmful unless:

- Contract structures change to reward outcomes, not volume

- They have population health management capabilities

- They can bear actuarial risk (requiring reserves, analytics, care management infrastructure)

- They can manage multi-year transition periods with revenue volatility
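A rough way to see why the revenue mix matters is to model what a drop in utilization does to margin. The sketch below is illustrative only. It assumes the 60% fee-for-service and 30% weakly accountable value-based revenue both behave as volume-linked, that risk-based revenue stays flat, and that variable costs are 40% of revenue. None of those assumptions comes from real provider financials.

```python
def net_margin_change(volume_linked_share, utilization_drop,
                      variable_cost_ratio=0.4, total_revenue=100.0):
    """Change in margin (same units as total_revenue) when utilization falls.

    Illustrative assumptions:
    - volume-linked revenue falls in proportion to utilization
    - risk-based revenue (capitation, shared savings) stays flat
    - variable costs are a fixed fraction of baseline revenue and
      fall in proportion to utilization
    """
    revenue_lost = volume_linked_share * total_revenue * utilization_drop
    cost_saved = variable_cost_ratio * total_revenue * utilization_drop
    return cost_saved - revenue_lost


# Mixed model from the list above: 60% FFS + 30% weak VBC treated as volume-linked.
mixed = net_margin_change(volume_linked_share=0.90, utilization_drop=0.10)
# Hypothetical risk-heavy provider: only 30% of revenue is volume-linked.
risk_heavy = net_margin_change(volume_linked_share=0.30, utilization_drop=0.10)

print(f"Mixed-model provider: {mixed:+.1f} per 100 of revenue")
print(f"Risk-heavy provider:  {risk_heavy:+.1f} per 100 of revenue")
```

Under these assumptions the break-even point sits where the volume-linked share equals the variable cost ratio. Above it, every avoided visit costs more revenue than it saves.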

Who can actually make this transition:

- Large integrated delivery systems with insurance arms (Kaiser, Geisinger)

- ACOs with mature value-based arrangements and shared savings experience

- Primary care groups with strong risk contracts and care management infrastructure

- Medicare Advantage-focused providers with experience managing populations

Who cannot:

- Independent specialists paid fee-for-service (orthopedics, cardiology, gastroenterology)

- Procedure-focused practices (surgery centers, imaging centers, infusion clinics)

- Rural hospitals operating on 2-3% margins with no room for revenue volatility

- Community providers without scale to invest in population health infrastructure

The result: strategic fragmentation. Some parts of healthcare embrace AI as an orchestration tool because it improves their margins. Others resist AI adoption because their business model requires volume preservation.

This creates exploitable arbitrage for new entrants and sophisticated incumbents. Organizations that successfully transition to value-based care can leverage AI aggressively. Those stuck in fee-for-service must fight AI adoption—creating internal resistance that accelerates their competitive decline.

The Structural Moats No One Discusses

Consumer trust might accrue to the interface. But healthcare has switching costs that interface capture cannot overcome:

Network inclusion: Your insurance only covers certain providers. AI can educate you about the “best” cardiologist in the region, but if they’re out-of-network and visits cost $500 out-of-pocket versus $30 in-network, you’re choosing based on economics, not AI recommendation.

This is especially powerful in narrow network plans (often 30-40% lower premiums) where choice is intentionally constrained to 20-30% of local providers.

Geographic constraints: You can’t teleport to the “best” hospital. For most Americans, there are 2-3 health systems within reasonable driving distance. In rural areas, there’s often just one. Interface competition doesn’t change local monopolies or duopolies.

Credentialing and licensing: Only licensed entities can prescribe medications, order imaging, perform procedures, or bill insurance. AI holds no license. This regulatory moat is absolute for certain activities.

A patient can be perfectly educated by AI about needing an MRI, but they still need a physician order. The AI can explain treatment options, but only a licensed provider can prescribe. This creates mandatory touchpoints that AI cannot bypass.

Existing care relationships: “My doctor” means something in healthcare that “my search engine” does not. Relationship stickiness is real, especially for:

- Chronic conditions requiring longitudinal management (diabetes, hypertension, autoimmune diseases)

- Behavioral health where therapeutic alliance matters

- Complex cases where the physician knows your history deeply

- Elderly patients with multiple comorbidities

Switching costs are high when trust has been built over years of interactions.

Employer selection: 160 million Americans get insurance through employers who pre-select 2-4 plan options with specific networks. Individuals have limited choice within those constraints.

Even the best AI navigation can’t overcome contractual limitations. If your employer’s health plan doesn’t include a specific hospital system, no amount of AI recommendation changes that.

Regulatory requirements: Certificate of Need laws (35 states) limit where hospitals and imaging centers can open. State licensing creates geographic monopolies for certain services. FDA approvals determine which treatments are available.

You can’t AI your way around the fact that there’s one cardiac surgery center in your county, and it has a three-month waitlist.

These aren’t information problems. They’re structural constraints that persist regardless of interface innovation.

The implication: AI shifts power at the margins but doesn’t eliminate incumbent advantages. The question is how large the margins are—and for which types of care.

For routine primary care, chronic disease management, mental health, and specialist selection—the margins are large. For emergency care, inpatient admissions, procedures, and narrow network contexts—the margins are small.

Strategy depends on which type of care you deliver.

The EHR Chokepoint

Any Stratechery-level analysis has to address the actual platform in provider workflow: the EHR.

Epic, Oracle Health (Cerner), and Meditech control:

- Clinical ordering and documentation (where physicians spend 50% of their time)

- Decision support integration points (where AI could insert itself)

- Billing and revenue cycle (how money flows)

- Data exchange standards (what data can leave the system)

- Interoperability APIs (how external systems connect)

Two-Sided Market Dynamics

Consumer side: ChatGPT can become the front door for patient questions, education, and navigation.

Clinician side: The EHR remains the workflow layer for care delivery—ordering labs, prescribing medications, documenting visits, billing.

Even if patients arrive AI-educated, if the clinician workflow doesn’t incorporate AI insights, the influence stops at education. The actual care delivery—ordering, prescribing, documenting, billing—happens in the EHR.

Patient: “ChatGPT recommended I ask about checking my HbA1c and considering metformin.”

Physician: “Okay, let me order that lab and we’ll discuss medication options.” [Orders in Epic, not ChatGPT]

The patient’s AI education influenced the conversation. But the EHR captured the transaction, the billing, and the follow-up workflow.

EHR vendors can:

- Build competing AI features natively (Epic is absolutely doing this—see Epic Cosmos with predictive models)

- Tax third-party integration through app marketplaces (Epic’s marketplace, launched as App Orchard, charges fees and requires certification)

- Control data exchange standards (SMART on FHIR exists, but with proprietary extensions that limit functionality)

- Block or slow integration through IT governance (security reviews, BAAs, integration testing—12-18 month timelines)

- Leverage physician workflow lock-in (switching EHRs costs $50M-$200M for a large health system and takes 2-3 years)
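To make the data-exchange point concrete, here is a minimal sketch of the kind of standard FHIR search an external tool would issue to fetch a patient’s most recent HbA1c result. The server URL and patient ID are hypothetical. A real SMART on FHIR call would also need an OAuth 2.0 access token granted by the EHR, and that grant is where vendor and IT governance control comes in.

```python
from urllib.parse import urlencode

FHIR_BASE = "https://fhir.example.org/R4"  # hypothetical FHIR endpoint


def hba1c_search_url(patient_id, base=FHIR_BASE):
    """Build a FHIR Observation search for the latest HbA1c result."""
    params = {
        "patient": patient_id,
        "code": "http://loinc.org|4548-4",  # LOINC code for hemoglobin A1c
        "_sort": "-date",                   # newest first
        "_count": "1",                      # only the latest result
    }
    return f"{base}/Observation?{urlencode(params)}"


url = hba1c_search_url("123")
print(url)
```

The query itself is standard. Whether it returns anything depends on which scopes the EHR vendor and the health system’s IT governance are willing to grant.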

Legacy CRM vendors couldn’t stop Salesforce from disrupting CRM because enterprises owned their CRM data and workflows. But healthcare IT is far more regulated, clinically risky, and resistant to change, which gives EHR vendors more room to defend their position.

Strategic implication: Consumer-facing AI might win the patient relationship. But clinical-facing AI must win the EHR vendor relationship—or build physician-facing tools so valuable that health systems demand integration.

These are different games requiring different capabilities. OpenAI has consumer distribution. Do they have enterprise healthcare IT relationships? Not yet.

The Unsexy Operational Prerequisites

“Data as an API” is elegant until you confront healthcare data reality:

Patient matching: The US has no universal patient identifier. Matching records across systems requires probabilistic algorithms that introduce 5-15% error rates.

When AI synthesizes data from your health system EHR, your wearable device, your pharmacy records, and your insurance claims—each uses different identifiers. Matching errors compound. “John Smith born 1975” might be three different people, or one person with inconsistent birth dates across systems.
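Here is a toy sketch of how probabilistic matching works and why errors compound. It scores a pair of records by summing log-likelihood ratios per field, in the style of Fellegi-Sunter record linkage. The per-field probabilities and the sample records are made-up illustrations, not measured values.

```python
import math

# Hypothetical per-field probabilities (illustrative, not measured):
# m = P(field agrees | same person), u = P(field agrees | different people)
FIELD_PARAMS = {
    "last_name": (0.95, 0.01),
    "first_name": (0.90, 0.02),
    "birth_date": (0.97, 0.001),
    "zip": (0.85, 0.05),
}


def match_score(a, b):
    """Sum of log2 likelihood ratios across the compared fields."""
    score = 0.0
    for field, (m, u) in FIELD_PARAMS.items():
        if a.get(field) is None or b.get(field) is None:
            continue  # a missing value contributes no evidence either way
        if a[field] == b[field]:
            score += math.log2(m / u)
        else:
            score += math.log2((1 - m) / (1 - u))
    return score


def all_links_correct(per_link_error, n_links):
    """Probability that every one of n independent links is right."""
    return (1 - per_link_error) ** n_links


ehr = {"last_name": "smith", "first_name": "john",
       "birth_date": "1975-03-02", "zip": "98101"}
pharmacy = dict(ehr, birth_date="1975-02-03")  # same person, transposed date
stranger = {"last_name": "smith", "first_name": "james",
            "birth_date": "1975-11-20", "zip": "60614"}

print(match_score(ehr, pharmacy), match_score(ehr, stranger))
# Linking four sources takes three links; errors multiply across them.
print(all_links_correct(0.05, 3), all_links_correct(0.15, 3))
```

Treating links as independent is itself a simplification. Even so, at a 5-15% per-link error rate, the chance that all three links for one patient are correct falls to roughly 86% at best and 61% at worst.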

Data quality: Healthcare data is notoriously messy:

- Missing data (60% of EHR fields have gaps)

- Inconsistent coding (ICD-10-CM has roughly 70,000 diagnosis codes, many misapplied)

- Delayed claims data (sometimes 90+ days lag between service and claim processing)

- Contradictory records (patient reports penicillin allergy, but EHR shows penicillin prescription filled last month)

AI trained on messy data produces messy outputs.

Provenance tracking: When AI synthesizes from multiple sources, you need to track: Who recorded this data point? When? Under what conditions? Has it been amended? Was it patient-reported or clinician-verified?

“Patient reports shortness of breath” (subjective, in patient portal) is different from “Oxygen saturation 88% on room air” (objective, measured in clinic). AI needs to weight these differently, which requires provenance metadata most systems don’t capture.
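One way to picture the missing metadata is a data point that carries its provenance with it. The structure below is a hypothetical sketch. The field names and the weights are assumptions for illustration, not a standard schema.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Verification(Enum):
    PATIENT_REPORTED = "patient_reported"
    CLINICIAN_MEASURED = "clinician_measured"


# Hypothetical weights: measured values count for more than self-reports.
EVIDENCE_WEIGHT = {
    Verification.PATIENT_REPORTED: 0.5,
    Verification.CLINICIAN_MEASURED: 1.0,
}


@dataclass(frozen=True)
class DataPoint:
    description: str
    source_system: str       # where the value was recorded
    recorded_by: str         # who recorded it
    recorded_on: date        # when it was recorded
    verification: Verification
    amended: bool = False    # carried along so reviewers can see edits


def evidence_weight(point):
    """Look up how much weight a data point gets from its verification level."""
    return EVIDENCE_WEIGHT[point.verification]


reported = DataPoint("Shortness of breath", "patient portal", "patient",
                     date(2025, 1, 5), Verification.PATIENT_REPORTED)
measured = DataPoint("Oxygen saturation 88% on room air", "clinic EHR",
                     "clinic staff", date(2025, 1, 6),
                     Verification.CLINICIAN_MEASURED)

print(evidence_weight(reported), evidence_weight(measured))
```

The weighting logic is trivial. The hard part is that every upstream system has to populate these fields in the first place.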

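Consent management: HIPAA and state privacy laws require tracking patient authorization for many kinds of data sharing. When a patient revokes authorization, further use and disclosure must stop, and organizations then have to decide what to do about downstream systems that already hold the data, including AI models that may have incorporated it.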

Maintaining this at scale across systems is operationally complex. Most healthcare organizations lack the infrastructure.
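At minimum, acting on a revocation means knowing which downstream systems already received a patient’s data. The sketch below shows that bookkeeping in its simplest form. The class, method, and recipient names are hypothetical, and a production system would also need audit logs, timestamps, and per-purpose scopes.

```python
from collections import defaultdict


class ConsentLedger:
    """Tracks authorizations and which recipients already received data."""

    def __init__(self):
        self._authorized = set()            # (patient_id, recipient) pairs in force
        self._disclosed = defaultdict(set)  # patient_id -> recipients holding data

    def grant(self, patient_id, recipient):
        self._authorized.add((patient_id, recipient))

    def disclose(self, patient_id, recipient):
        """Record a data transfer, refusing it if no authorization is in force."""
        if (patient_id, recipient) not in self._authorized:
            raise PermissionError(f"no authorization for {recipient}")
        self._disclosed[patient_id].add(recipient)

    def revoke(self, patient_id):
        """Withdraw all authorizations; return recipients needing follow-up."""
        self._authorized = {p for p in self._authorized if p[0] != patient_id}
        return sorted(self._disclosed.get(patient_id, set()))


ledger = ConsentLedger()
ledger.grant("p1", "pharmacy_app")
ledger.grant("p1", "ai_navigator")
ledger.disclose("p1", "ai_navigator")
followups = ledger.revoke("p1")
print(followups)  # recipients that already hold this patient's data
```

Multiply this by every partner, every data type, and every model training run, and the scale of the operational problem becomes clear.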

Explainability: When AI makes a clinical recommendation, you need to trace back through data lineage to understand why.

Not just “the model said so” but “these three lab values from last month (source: LabCorp, ordered by Dr. Johnson) plus this imaging result from last year (source: Radiology Associates, read by Dr. Smith) plus this family history element (source: patient-reported in 2023 intake form) produced this recommendation.”

That level of explainability requires data infrastructure most healthcare organizations don’t have.
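A minimal sketch of what traceable output could look like: each recommendation carries the evidence behind it, and the explanation is generated from that lineage rather than written after the fact. The class names and the placeholder recommendation are hypothetical. The sources mirror the example above.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    finding: str
    source: str
    recorded: str


@dataclass
class Recommendation:
    text: str
    evidence: list = field(default_factory=list)

    def is_traceable(self):
        """True only if every supporting item names its source."""
        return bool(self.evidence) and all(e.source for e in self.evidence)

    def explain(self):
        """Render the recommendation together with its data lineage."""
        lines = [f"Recommendation: {self.text}", "Based on:"]
        for e in self.evidence:
            lines.append(f"- {e.finding} (source: {e.source}, {e.recorded})")
        return "\n".join(lines)


rec = Recommendation(
    text="<placeholder recommendation>",
    evidence=[
        Evidence("Three lab values", "LabCorp, ordered by Dr. Johnson", "last month"),
        Evidence("Imaging result", "Radiology Associates, read by Dr. Smith", "last year"),
        Evidence("Family history", "patient-reported intake form", "2023"),
    ],
)
print(rec.explain())
```

A recommendation with an empty or unsourced evidence list fails `is_traceable()`, and that is the case most current pipelines would hit.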

These aren’t technology problems that better algorithms solve. They’re operational problems requiring data governance, master data management, and process discipline.

The organizations that solve these unsexy problems create genuine defensive moats—not through AI superiority, but through operational excellence that makes AI deployment actually viable.

The Build vs. Integrate Decision Framework

Now we can answer the core questions with precision.

If you’re a healthcare provider, you face three interrelated strategic decisions:

Question 1: Do You Make Your Data Accessible to ChatGPT?

This isn’t binary. The real question is: What data do you share, through which mechanisms, and what do you get in return?

Make data too accessible:

- Risk: You’re training an AI that could route patients to competitors

- Risk: You’re creating compliance exposure if PHI leaks

- Risk: You lose control over how your clinical expertise is represented

Make data too restrictive:

- Risk: You’re invisible to the AI layer becoming the front door

- Risk: Patients get generic guidance instead of personalized recommendations based on your protocols

- Risk: Competitors who do integrate gain competitive advantage

The middle path—strategic data participation:

Share broadly:

- General clinical protocols and treatment guidelines (not differentiated)

- Quality metrics and outcome data (builds credibility)

- Service availability and scheduling (drives volume)

- Patient education materials (builds trust)

Share selectively with controls:

- De-identified outcomes data that demonstrates clinical excellence

- Decision support logic that can route appropriate patients to you

- Integration points that make scheduling/intake frictionless

Never share:

- Identified patient data without explicit consent and BAA

- Proprietary clinical protocols that represent competitive advantage

- Data that could train competitors’ AI systems

Get in return:

- Patient volume from AI-driven referrals

- Data on unmet patient needs (what questions are people asking at 2am?)

- Competitive intelligence (where are patients going when they don’t come to you?)

Question 2: Do You Build Proprietary AI or Integrate with General Platforms?

Build proprietary AI when you can answer YES to all five:

1. Do you have longitudinal outcomes data that doesn’t exist elsewhere?

Not just patient records—everyone has those. I mean: genotype-phenotype correlations over years, treatment response patterns in rare diseases, therapy outcomes with conversational data, multi-modal data integration (genomic + imaging + clinical + molecular).

If your data advantage is “we have more of the same kind of data,” you can’t build a defensible AI moat. If your data advantage is “we have different types of data, integrated in ways no one else has,” building makes sense.

2. Can you pair AI depth with operational capacity to deliver care?

AI recommendations must lead to care you can actually provide. Otherwise you’re just educating customers for competitors.

Do you have:

- Clinical capacity to absorb increased demand

- Multidisciplinary teams for complex cases

- Geographic reach to serve patients

- Care coordination infrastructure

3. Do you have payer alignment or direct-to-consumer access?

Your AI-recommended treatments need coverage pathways or self-pay economic models. Clinical correctness without economic viability fails.

Can you:

- Navigate prior authorization for recommended treatments

- Demonstrate cost-effectiveness to payers

- Sustain a direct-to-consumer model if insurance doesn’t cover

4. Can you absorb clinical liability and regulatory requirements?

When your AI influences treatment decisions, you’re accountable. This requires:

- Malpractice insurance for AI-assisted decisions

- FDA clearance strategy if your AI qualifies as medical device software

- Clinical governance (how do you validate the AI, monitor for errors, handle failures)

- Bias detection and fairness auditing

5. Does your data flywheel improve as you treat more patients?

Each patient interaction should make your AI smarter in ways that strengthen your moat. Network effects in data, not just scale effects.

Every patient treated generates data → Data improves model → Better model attracts more patients → More patients generate more data.

If this flywheel doesn’t exist, you’re just building expensive software without compounding advantages.
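The five-question test is strict by design: one "no" is enough to tip the decision toward integration. A small sketch of that logic follows, with hypothetical labels standing in for the five questions.

```python
# Hypothetical short labels for the five questions above.
CRITERIA = (
    "differentiated_longitudinal_data",
    "operational_capacity",
    "payer_alignment_or_dtc_access",
    "liability_and_regulatory_capacity",
    "compounding_data_flywheel",
)


def build_or_integrate(answers):
    """Return ('build', []) only when all five answers are True.

    Any missing or False answer yields ('integrate', [unmet criteria]).
    """
    unmet = [c for c in CRITERIA if not answers.get(c, False)]
    return ("build" if not unmet else "integrate", unmet)


# Example: strong data and delivery capacity, but no compounding flywheel.
decision, gaps = build_or_integrate({
    "differentiated_longitudinal_data": True,
    "operational_capacity": True,
    "payer_alignment_or_dtc_access": True,
    "liability_and_regulatory_capacity": True,
    "compounding_data_flywheel": False,
})
print(decision, gaps)
```

Returning the unmet criteria alongside the decision keeps the conversation on what would have to change, not just on the verdict.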

Examples where building makes sense:

| Organization | Data Advantage | Operational Capacity | Economic Model | Why Build Works |
| --- | --- | --- | --- | --- |
| Fred Hutch Cancer Center | Decades of clinical trial data, genomic sequencing, outcomes for blood cancers | NCI designation, clinical trial infrastructure, multidisciplinary teams | Insurance coverage for specialized cancer care, pharma partnerships | Proprietary treatment protocols for rare leukemias that don’t exist in literature |
| Memorial Sloan Kettering | Treatment protocols across hundreds of thousands of cancer cases, tumor board decisions | Specialized cancer centers, surgical expertise, radiation oncology | Center-of-excellence contracts, insurance coverage | Multi-specialty decision-making patterns for complex oncology cases |
| GeneDx | 1M+ whole genome sequences with phenotype data | Partnerships with genetic counselors and specialists | Insurance coverage for genetic testing, DTC genetic interpretation | Variant interpretation through population-level pattern matching |
| Talkspace | 10M+ therapy hours with conversational analysis | Licensed therapists, therapy delivery infrastructure | Insurance contracts, DTC subscription model | Semantic patterns predicting treatment response and therapist-patient fit |
| Tempus | Genomic + imaging + pathology + treatment response data | Clinical partnerships embedding them in care delivery | Pharma partnerships, payer contracts for decision support | Multi-modal integration for treatment selection |

Integrate with general AI when:

1. Your competitive advantage is care delivery, not data depth

You win on:

- Operational excellence and patient experience

- Geographic convenience

- Local market dominance

- Brand and reputation

- Care coordination capabilities

Building AI doesn’t strengthen these advantages.

2. You lack capital for sustained AI investment

$10M+ over 3-5 years is table stakes. Less produces pilot projects that never scale.

Budget reality check:

- AI talent: $300K-$500K per senior ML engineer

- Data infrastructure: $2M-$5M for data lakes, pipelines, governance

- Clinical validation: $1M-$3M for studies demonstrating safety and efficacy

- Regulatory: $500K-$2M for FDA clearance if required

- Ongoing operations: $2M-$5M annually for maintenance, monitoring, updates

If you can’t commit this level of investment, integration is smarter.
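Adding up the line items shows how quickly the total clears the $10M bar. The sketch below assumes a team of four senior ML engineers and treats ongoing operations as additive to salaries. Both are assumptions for illustration. The other figures are the low and high ends of the ranges above.

```python
def program_cost(years, engineers, salary, infra, validation,
                 regulatory, annual_ops):
    """Total program cost: recurring items scale with years, the rest are one-time."""
    recurring = (engineers * salary + annual_ops) * years
    one_time = infra + validation + regulatory
    return recurring + one_time


TEAM_SIZE = 4  # hypothetical headcount, not from the budget list

low = program_cost(years=3, engineers=TEAM_SIZE, salary=300_000,
                   infra=2_000_000, validation=1_000_000,
                   regulatory=500_000, annual_ops=2_000_000)
high = program_cost(years=5, engineers=TEAM_SIZE, salary=500_000,
                    infra=5_000_000, validation=3_000_000,
                    regulatory=2_000_000, annual_ops=5_000_000)

print(f"Low end:  ${low / 1e6:.1f}M over 3 years")
print(f"High end: ${high / 1e6:.1f}M over 5 years")
```

With these inputs the range runs from about $13M to $45M, so even the cheapest version of every line item clears the $10M threshold.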

3. Your data isn’t differentiated from published literature

If ChatGPT can learn 90% of what you know from medical textbooks and journals, building proprietary AI is wasted effort.

Test: Would your AI recommendations differ from a general AI trained on medical literature? If not, you’re a commodity.

4. You need to reduce patient friction, not build an algorithmic moat

Sometimes the goal is just making it easier for patients to:

- Schedule appointments

- Navigate your services

- Understand their treatment plan

- Complete intake forms

Commodity AI solves these problems. Custom AI doesn’t add value.

5. Your value is orchestration and accountability, not cognition

If you win by:

- Coordinating complex care across specialists

- Maintaining regulatory compliance

- Bearing clinical risk and liability

- Managing long-term patient relationships

Let others build the AI. Your moat is execution.

Examples where integration makes sense:

- Community hospitals providing general acute care (integrate with general AI for triage and patient education, focus on operational excellence)

- Primary care networks managing common chronic conditions (use commodity AI for patient engagement, differentiate on care coordination)

- Ambulatory surgery centers focused on specific procedures (integrate AI for scheduling and pre-op education, compete on surgical outcomes and patient experience)

- Most specialist practices where clinical judgment matters but data volume is insufficient (use general AI for patient education, differentiate on expertise and outcomes)

Question 3: How Do You Redesign Your Organization?

This is the question most healthcare executives ignore, and it’s the most important.

AI changes more than technology. It changes:

- Workflows: Patients arrive educated with questions; staff need different conversation scripts

- Culture: From paternalistic (“I’ll tell you what you need”) to collaborative (“Let’s discuss options together”)

- Incentives: Value-based care becomes mandatory when AI helps patients navigate to appropriate care

- Business models: Volume-based revenue shrinks when patients optimize their care seeking

Concrete actions:

- Assess current patient touchpoints and AI impact (what gets better, what becomes obsolete)

- Establish AI governance at board level (not just IT committee)

- Train front-line staff for interactions with AI-educated patients

- Pilot integration with consumer AI for scheduling and patient education

- Build API infrastructure that makes your system easy for AI to integrate with

- Publish quality and outcome data in AI-ingestible formats

- Redesign workflows assuming patients arrive prepared

- Launch value-based care pilots where AI-driven efficiency benefits you

Long-term (1-3 years):

- Shift majority of contracts to value-based models, if it makes sense

- Build care management infrastructure for longitudinal relationships

- Develop vertical AI if you meet the five criteria

- Become indispensable execution partner for AI-mediated healthcare

Most organizations will fail at Question 3 even if they get Questions 1 and 2 right, because organizational change is harder than technology decisions.

What Leaders Must Do Differently

Stop protecting portals. They’re destinations in a world that no longer navigates that way. Your patient portal with 8% utilization is already obsolete. Accept it.

Stop hoarding data. Data that cannot participate in consumer reasoning loses relevance. The value is in synthesizing data into action, not locking it away behind seven security layers and impossible consent forms.

Stop confusing intelligence with accountability. One scales cheaply through AI. The other requires humans, licenses, infrastructure, and risk-bearing—which doesn’t scale cheaply but also can’t be commoditized.

Start building for AI-mediated demand:

- API-first integration that makes you the easiest health system for AI to route to

- Real-time scheduling and capacity management that can respond to AI-driven demand shifts

- Transparent pricing and quality data that AI can surface to consumers

- Streamlined intake for AI-prepared patients who arrive educated

- Care team training for collaborative relationships with informed, empowered patients
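"Data that AI can surface" means, in practice, structured and machine-readable listings instead of PDFs and portal pages. Here is a hypothetical sketch of what one published record might look like. The field names and every value are placeholders, not a proposed standard or real data.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ServiceListing:
    organization: str
    service: str
    next_available: str    # ISO 8601 date
    cash_price_usd: int
    quality_metric: str
    quality_value: float
    quality_period: str


# Placeholder values for illustration only.
listing = ServiceListing(
    organization="Example Health System",
    service="Outpatient physical therapy evaluation",
    next_available="2026-01-15",
    cash_price_usd=120,
    quality_metric="patient_reported_improvement_rate",
    quality_value=0.0,
    quality_period="2025",
)

payload = json.dumps(asdict(listing), indent=2, sort_keys=True)
print(payload)
```

The format matters less than the commitment behind it: availability, price, and quality published in one place, kept current, and easy for any AI intermediary to parse.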

Start redesigning financial models:

- Shift toward value-based contracts where AI-driven efficiency benefits you, not harms you

- Build population health capabilities to manage longitudinal patient relationships

- Develop care management infrastructure that turns episodic care into continuous engagement

Start establishing governance:

- Board-level AI strategy discussions (not just IT implementation updates)

- Clinical validation protocols for any AI touching patient care

- Bias detection and equity monitoring across AI-influenced pathways

- Clear accountability chains when AI-influenced care causes harm

- Transparency about AI use that builds rather than undermines trust

The Endgame

ChatGPT is becoming where health questions begin. Healthcare companies still control where care happens, how it happens, and who is responsible when things go wrong.

The gap between those two layers is where the next generation of healthcare infrastructure will be built.

Not by those with the best AI. Not by those with the most data. But by those who can orchestrate commodity intelligence into accountable care delivery—in a fragmented system with regulatory constraints, misaligned incentives, and operational complexity that software alone cannot solve.

But let’s be clear about the power dynamics:

OpenAI doesn’t need to control healthcare delivery to win. They just need to control intent formation, trust accumulation, and influence at the moment of decision. That’s sufficient to reshape demand curves, affect utilization patterns, and capture enormous value, even while healthcare companies retain delivery capabilities.

Healthcare companies don’t need to build better AI to defend their position. They need to become indispensable execution partners for AI-mediated healthcare. That means seamless integration, operational excellence, clinical accountability, and genuine value delivery.

The few healthcare organizations with true vertical depth should absolutely build proprietary AI. But they must pair that AI with operational capacity, economic viability, and regulatory compliance. Data alone isn’t a moat.

The winners won’t own the front door. They won’t own the back office. They’ll own the integration layer that makes both sides valuable—the nervous system that turns AI cognition into health outcomes.

Healthcare isn’t being disrupted by AI. It’s being unbundled into cognition (which AI commoditizes), decision-making (which AI influences), and execution (which remains stubbornly expensive and local). Your strategy depends on which layers you can actually defend—and whether you have the organizational capacity to transform before someone else does.

Liat Ben-Zur is CEO of LBZ Advisory and serves on the boards of Talkspace, Compass Group, and Splashtop. She helps healthcare companies navigate strategy in an AI-native world—without the magical thinking.